'All Eyes on Rafah': How a viral campaign exposed an unfolding AI war

Over the past eight months, we have borne witness to unspeakable horrors stemming from Israel's war on Gaza.

After its most recent offensive targeting a tent camp for displaced Palestinians in Rafah on 26 May, the world saw a headless baby being held together while bodies turned to ash and fires raged on in the background. Forty-five people were killed that Sunday evening in what was likely a retaliation strike after the International Court of Justice ordered Israel to halt military operations in Rafah.

These apocalyptic scenes were a reminder that Palestinians cannot escape bombardment.

The relentless assault since 7 October has left more than 36,000 Palestinians murdered (not counting those trapped under the rubble), tens of thousands injured and almost two million internally displaced as a result of Israel's strategic and deliberate violence. These scenes have circulated on social media in unprecedented volume in what has been described as "the first live-streamed genocide".

There is no shortage of content being transmitted from the heart of Gaza as testament to the terror unfolding in real time. Indeed, an unsettling, if predictable, feature of this influencer age is how the brave Palestinian voices documenting events and broadcasting them to the world have become household names to us all.

The sheer volume of raw, horrific footage circulating on social media means that mainstream outlets have long been displaced as a primary source of news in favour of TikTok, Instagram and X.

We follow the feeds of young people on the ground who share not only imagery but also a window into their lives and personal experiences. We share in their fear and their grief, and we worry for their safety if they have not posted that they survived the night.

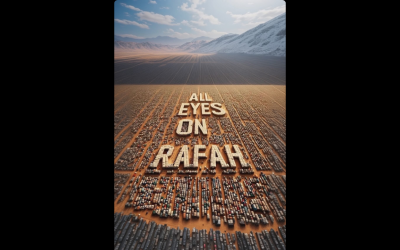

In the context of all this, we observed with great interest the speed with which the now-ubiquitous "All Eyes on Rafah" Instagram template became viral.

The image, generated by artificial intelligence (AI), was composed of white tents overlaid on an endless expanse of neatly arranged tents in different shades, overshadowed by snow-capped mountains.

The words displayed in bold capitals and the neat image seem incongruous with one another; the reality on the ground in Rafah is entirely different to what is being shared. But perhaps that is what made it so easy to share.

It may have been that the complete sanitisation and deliberate omission of graphic imagery made it an easier "share" for social media users at a time when "influencers" and celebrities have been heavily criticised for their lack of engagement on Palestine.

But if ease or comfort are deciding factors in the content we share, then a fundamental question we must ask ourselves is: what are we ameliorating when posting this image instead of a real-life one? What exactly are we protecting?

Digital activism

With the onset of the war on Gaza came a notable shift in how we engage with technology and the digital space. Threats of doxxing commonly loom over students protesting the atrocities, as do concerns over professional repercussions when employers are tagged in or informed about social media posts.

In a recent case, Faiza Shaheen, a prospective candidate for Britain's Labour Party, was allegedly dropped for liking tweets supporting Boycott, Divestment and Sanctions (BDS) against Israel. Activist intimidation is real, as is its impact, and so the virality of the "Rafah" post, which has surpassed 40 million shares, may come down to the sense that there is safety in numbers.

Many critics of the AI-generated graphic likened it to other viral gestures deemed shallow and performative, such as the infamous black profile squares and accompanying #BlackoutTuesday posts, which gained popularity during the 2020 George Floyd protests as a show of solidarity with Black communities against the violent injustices and exclusions they faced.

Others have suggested that the "Rafah" post is proof of art's political capabilities and its potential as a form of protest and resistance, given that the absence of "horror" may also have strategically contributed to the image's massive reach by allowing it to bypass filtering or blocking mechanisms.

But this episode prompts us to consider more deeply the role and impact of AI in online narrative-building and activism. The ease and speed with which AI content can be generated have variously been hailed as a source of fascination, amusement and even empowerment.

Type in a few keywords, and we can have at our fingertips an image (or text) that may somehow express our thoughts and expose our anxieties and prejudices at the same time.

While the digital space has arguably opened up a multitude of possibilities for activism, it also raises questions about how effective viral online trends are in achieving their objectives.

In this neo-liberal age, what exactly are the full impacts of hashtags and mass "blockouts"?

While they may indeed affect their intended targets, what information about ourselves do we surrender to social media companies when we engage in this activism, and could such trends become tools to herd, contain or pacify activism?

'AI genocide'

In response to the popularity of the "Rafah" image, numerous counter-graphics were produced, one of which depicts a Hamas fighter holding a gun and looking down at a baby, with the question "Where were your eyes on October 7?" emblazoned in capital letters.

Whatever our political leanings, it is clear that AI-generated content is emboldening our storytelling and galvanising a content-driven audience. At the same time, it is making it harder to distinguish truth from falsehood, and as bots and fake accounts pushing ideologies multiply, our distrust of technology will only deepen. What complicates this further is that we are living through a moment when citizen journalism is at its peak.

We are quite literally witnessing the first AI war unfolding in real time, whether through viral AI-generated posts that fuel narratives or through the use of AI surveillance and weaponry to inflict the most harm.

While opinions differ on whether the sharing of AI-generated content is acceptable, we must urgently address how AI is being used to advance warfare through surveillance and "targeted" killings.

In 2021, Israel boasted about its use of innovative technology during an incursion known as "Operation Guardian of the Walls", in which 261 Palestinians were killed as Gaza became an annual laboratory for AI weaponry.

The Israeli military's ongoing reliance on AI marking and tracking systems such as 'Lavender', 'The Gospel' and 'Where's Daddy?' confers terrifyingly sweeping powers on programmes authorised to kill using 'dumb bombs' (unguided munitions) with minimal human oversight.

The use of drones and sophisticated surveillance programmes to destabilise the lives of Palestinians while preserving the lives of Israeli soldiers by avoiding a ground invasion shows how AI technologies are used to prioritise some lives over others.

In these ways, AI is being used to dehumanise, erase and threaten the lives of Palestinians, whether deliberately through weaponry used on entire populations or perhaps inadvertently through the circulation of sanitised images that conceal the horrors routinely inflicted on Palestinians.

In these urgent times, obscuring the truth through AI will only serve to cloud our vision, a paradox we must remain alert to as the volume of content at our fingertips continues to mushroom unabated.

The views expressed in this article belong to the authors and do not necessarily reflect the editorial policy of Middle East Eye.

Middle East Eye delivers independent and unrivalled coverage and analysis of the Middle East, North Africa and beyond. To learn more about republishing this content and the associated fees, please fill out this form. More about MEE can be found here.